Scholarship for olympiad participants

Are you a laureate or finalist of a subject olympiad? Find out how to obtain a scholarship!

On the occasion of the Faculty's 30th anniversary

We warmly invite you to the alumni reunion on 22 June 2024.

News

Events

-

![Wykład nr 20: Metody analizy nieliniowej w wybranych zagadnieniach zagospodarowania przestrzennego i planowania transportu]()

Wykład nr 20: Metody analizy nieliniowej w wybranych zagadnieniach zagospodarowania przestrzennego i planowania transportu

-

![Ogólnopolska Konferencja Studentów Matematyki Θβℓιcℤε]()

Ogólnopolska Konferencja Studentów Matematyki Θβℓιcℤε

-

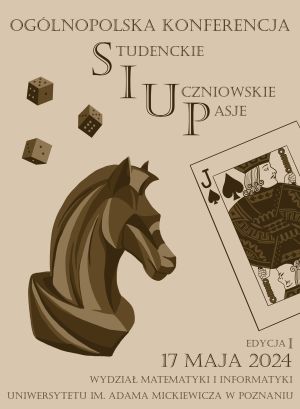

![Ogólnopolska Konferencja SiUP – Studenckie i Uczniowskie Pasje edycja I]()

Ogólnopolska Konferencja SiUP – Studenckie i Uczniowskie Pasje edycja I

-

![Wykład z informatyki im. Mariana Rejewskiego, Jerzego Różyckiego, Henryka Zygalskiego 2024]()

Wykład z informatyki im. Mariana Rejewskiego, Jerzego Różyckiego, Henryka Zygalskiego 2024

-

![Absolutorium 2024]()

Absolutorium 2024

About the Faculty

As a unit of a research university, the Faculty of Mathematics and Computer Science (Wydział Matematyki i Informatyki) of UAM in Poznań continues the more than 100-year tradition of Poznań mathematics. It is also one of the best research and teaching centres for computer science in Poland. The Faculty currently offers four fields of study: mathematics, computer science, data analysis and processing, and teaching of mathematics and computer science. The last of these is unique in Poland. The Faculty also offers postgraduate programmes.

- 4 fields of study

- 1700+ students

- 6000+ alumni

First-cycle studies

- Mathematics (Matematyka)

  Are you fascinated by the queen of the sciences? Do you have an analytical mind? Do you want to study mathematics at a leading Polish university? Choose the Mathematics programme and make the most of what this discipline offers! Mathematics is an important part of human culture and activity, and it develops critical thinking. Over 3 years you will gain knowledge and skills in selected areas of mathematics. These studies are an adventure full of intellectual challenges!

- Computer Science (Informatyka)

  Experts in the IT sector can count on a wide choice of well-paid job offers spanning many areas of science and business. So if you want to strengthen your analytical thinking and are not afraid of technology, we have something for you! We have prepared a 3.5-year engineering programme in Computer Science that gives you a thorough grounding in computer science and mathematics. Depending on your interests, you can choose one of four tracks: Artificial Intelligence, Algorithm Design, Cybersecurity, and Computer Game Programming. We work with many companies in the IT sector, so you will also learn practical applications of modern technologies, which will help you find your place in a diverse and constantly changing job market.

- Quantum Computing (Informatyka kwantowa)

  Quantum Computing is an interdisciplinary programme combining physics, computer science and mathematics. Together with the UAM Faculty of Physics and IBM, we have prepared a 3.5-year (7-semester) engineering programme in which you will write and run your own programs on quantum computers and simulators. Rapid technical progress and huge investment in quantum technologies mean that the number of jobs is growing faster than the number of experts!

- Teaching of Mathematics and Computer Science (Nauczanie matematyki i informatyki)

  We launched this unique programme for people who want to combine their knowledge of mathematics and computer science with a passion for teaching! In the Teaching of Mathematics and Computer Science programme we train future teachers of these two school subjects. Over three years you will gain the solid knowledge of mathematics and computer science needed to teach them. We will equip you with the professional and personal competences to meet the challenges facing modern education. Choose Teaching of Mathematics and Computer Science to educate the next generations!


Second-cycle studies

- Mathematics (Matematyka)

  Do you want to deepen your knowledge of mathematics? Are you planning to continue your studies and looking for a programme full of challenges? Choose the second-cycle Mathematics programme! We have created a 2-year supplementary programme that broadens your horizons with, among other things, skills in advanced mathematical methods. We will also prepare you to start an academic career through doctoral studies.

- Computer Science (Informatyka)

  Continue your studies in Computer Science and choose one of two innovative specialisations offered within the AI Tech project (full-time studies). The innovative Computer Science curriculum, run in cooperation with leading universities offering Computer Science degrees, launches in March 2021. As an AI Tech participant you will be able to take part in internships in Poland and abroad, study visits, and summer schools.

- Teaching of Mathematics and Computer Science (Nauczanie matematyki i informatyki)

  Continue your education in Teaching of Mathematics and Computer Science and qualify to work as a teacher. We are the only university in Poland offering a programme that lets you teach mathematics and computer science at every level of education. Over two years we will equip you with the professional and personal competences to meet today's challenges in education. Throughout your studies you will have constant access to modern, high-quality equipment. Choose Teaching of Mathematics and Computer Science to educate the next generations!

- Data Analysis and Processing (Analiza i przetwarzanie danych)

  Every day, the results of data analysis and visualisation translate into professional, business and social success. We launched this 2-year master's programme for people who want to deepen their knowledge of data analysis and processing. As a graduate of Data Analysis and Processing you will be a specialist sought after on the job market, distinguished by subject knowledge and practical experience.
